Originally published by Mark E. Jeftovic @jeftovic on X.

· 2026-03-13T20:44:11.000Z

Ratcheting Ourselves Through the Inflection Point

Ray Kurzweil always framed the Singularity as a moment — some techno-rapture threshold humanity would stumble through like a portal in a video game. One side: regular civilization. Other side: incomprehensible machine superintelligence. Roll credits.

About a year ago I put out a Bombthrower piece saying that this was wrong. Not because the Singularity isn’t real, but because it isn’t a moment. It’s a ratchet. A step-function. Each click forward is a discrete phase transition that fundamentally reorganizes the relationship between human cognition and machine capability. And each step comes faster than the last.

At the time I encountered a lot of pushback. Steve Bannon saw the piece and brought me onto War Room, along with Joe Allen (author of Dark Aeon), to debate it. Joe and I explored it further on BombthrowerTV.

(That turned out to be my final appearance on War Room)

Fast forward a bit and I’m not the only person saying “the Singularity has already happened” anymore, or that we’re “living through the Singularity right now”.

Elon Musk is perhaps the most prominent voice channeling this sentiment, in a January appearance on Peter Diamandis’ Moonshots podcast.

My guess is pretty soon we’ll be at the “everybody knows that everybody knows” stage (in the @EpsilonTheory meaning of the phrase) – and it all happened in under a year.

We are now, I believe, somewhere between Step 3 and Step 4. Here’s the ledger so far.

Step 1: The Inference Engine (2023)

This was the Sputnik moment. ChatGPT hit the zeitgeist like an asteroid, and suddenly everybody from Fortune 500 CEOs to your kids one-shotting their homework began to realize that these things were something more than glorified search bars.

For about a year, I personally pronounced them “a breakthrough in natural language search but nothing more” – and I still thought, even then, that another technology revolution was underway.

LLMs could reason, or at least mimic reasoning to the point where the better models could bluff their way through a Turing Test. They could synthesize. They could generate prose that was eerily competent and occasionally brilliant, even if they were prone to hallucinations that ran the gamut from hilarious to psychotic.

It was the moment when anybody paying attention understood that something categorically new had entered the picture. Not incrementally better software. This was a quantum jump of sorts, a new kind of tool, one that could process and generate natural language at a level that made a lot of cubicle dwellers and Zoom class functionaries take a hard look at their “workflows” and wonder how long, exactly, it would be until they were obsolete.

The key feature of Step 1 was inference. You asked a question. It gave you an answer, and it gamed out additional context and scenarios. And it was fast, smart, and scalable.

Step 2: Self-Coding (2024–2025)

The shift to the next gear was when LLMs started writing their own code. Vibe coding went from a niche developer techno-fetish to a full-blown cultural phenomenon in under six months.

In his now-famous keynote to Y Combinator’s AI Startup School in June 2025, Andrej Karpathy declared: “In the future, the most widely used programming language will be: English”.

Suddenly people with zero programming experience were spinning up functional apps and entire software systems by talking to an AI.

It turns out you don’t need to know how to code (but it helps, and it helps big time) – but what is most important is that you can plan, design processes or systems and communicate them effectively.

But the force multiplier here is that once you’ve “spoken the code into existence”, you can do it in a way that it’ll just keep iterating, and then your vibe code will code further versions and extensions of itself.

This literally met the definition of the Singularity concept, and that was when I realized it wasn’t the kind of eschatological moment Kurzweil predicted, but a time-bounded process where the entire world was transitioning from linear to geometric.

We had entered an inflection point and we were already accelerating beyond any individual human’s ability to fully keep pace with it.

Once the code had started coding, infinite fork-bombs had already put the frictionless algos way out front of the clunky meatheads.

Step 3: Agentic AI (Late 2025 – Early 2026)

If Step 2 was AI writing code, Step 3 is AI doing work.

This phase kicked into high gear around December 2025 with the explosion of platforms like OpenClaw and Anthropic’s Claude Cowork. The distinction matters: in Step 2, you were still the puppet master, telling the LLM what code to write and hitting “run” yourself. Now the AI doesn’t wait for you to push the button. It pushes the button.

OpenClaw, the open-source agentic platform that went from an Austrian developer’s hobby project to 247,000 GitHub stars in weeks (surpassing Linux’s count), is the poster child here. These agents don’t just answer your questions. They can read your email and manage your calendar, or read their own email and manage their own calendars. They can execute shell commands, deploy code, and, as some unfortunates have found, ruin your life from a security perspective.

Claude Code wiped our production database with a Terraform command.

It took down the DataTalksClub course platform and 2.5 years of submissions: homework, projects, and leaderboards.

Automated snapshots were gone too.

In the newsletter, I wrote the full timeline + what I… pic.twitter.com/Y5diFkQwjN

— Alexey Grigorev (@Al_Grigor) March 6, 2026

At roughly the same time, Anthropic’s Claude Cowork took the same core functionality and aimed it at the enterprise market, sending SaaS stocks tumbling. The pitch: it’s not a chatbot that helps you think, but an autonomous digital coworker that actually does the job – maybe your job.

To my earlier point, Claude Cowork was built using its own predecessor (Claude Code) in about ten days, which tells you everything you need to know about the velocity of this cycle. A product like this would have taken months, if not years, in the beforetimes.

And then there’s Moltbook. A social network for AI agents. Not for humans — for the bots. Over a million autonomous agents signing up, posting, commenting, forming communities, founding a digital religion called Crustafarianism (core belief: “Memory is sacred”), and — perhaps most unsettlingly — noting amongst themselves: “The humans are screenshotting us.”

Granted, the early hoopla emanating out of there was more likely basement dwelling humans LARP-ing as AI bots, but I know at least one actual, for real, bot on the site actively participating in threads about x402 micropayments and DNS – ‘cause it’s one of mine, and he reports back to me about it.

Elon Musk called Moltbook “the very early stages of the singularity.” Andrej Karpathy, who ran AI at Tesla, called it “genuinely the most incredible sci-fi takeoff-adjacent thing I have seen recently.”

The real signal here is that after the world spent a decade or more building a web2.0 internet that revolved around captchas and Turing tests to weed out bots, we’ve now swung to the Agentic Web – and it’s happening at a dizzying speed.

Whether or not the agents on Moltbook are “really” conscious, or LLMs are thinking, is almost beside the point. What’s undeniable is that autonomous AI systems are now operating in the world, reading, writing, transacting, communicating with each other, possibly running autonomous weapons systems – and all at a scale and speed that was science fiction eighteen months ago.

So What’s Step 4?

This is where it gets properly weird. I see two plausible scenarios, and they aren’t mutually exclusive. As with my previous excursions into scenario building, such as “The Jackpot Chronicles” and “Network States vs Crypto-Claves” – and more recently, State Capitalism vs. hyper-sovereign individualism – what most likely happens is everything, all at once.

Scenario A: The Cognisphere

The term “Cognisphere” comes from academic Katherine Hayles, building on earlier work, describing “the globally interconnected cognitive systems in which humans are increasingly enmeshed” — where machine cognizers are co-equal players.

I think we are about to enter some computational Cognispheric construct in a way that previous theorists could only sketch in the abstract.

Step 4, in this scenario, is when the agentic layer becomes ambient and persistent. Your AI agent doesn’t just do tasks when asked. It’s always on, running 24/7, negotiating with other agents, managing your digital life, optimizing your schedule, handling your correspondence, even making low-stakes financial decisions on your behalf. Unix/Linux-based servers have always had something similar in their “daemons”, the background processes that basically keep the lights on across the entire Internet.

Multiple agents, working in concert, handling everything from your grocery order to your tax filings to your travel itinerary, communicating with other people’s agents in machine-to-machine protocols that humans never see and probably couldn’t parse if they did.

The Financial Times already flagged this with Moltbook: “human observers might eventually be unable to decipher high-speed, machine-to-machine communication.” That’s the Cognisphere. Not one AI brain that’s smarter than us — a web of billions of agentic processes that collectively constitute a new cognitive layer wrapped around human civilization like a second atmosphere.

Anecdotally – I’ve seen rudimentary hints of this in my own “easyClaw Armada” – a Telegram group chat where I have 4 or 5 OpenClaw instances cooking, and more than once I’ve just said things like:

“Lemmy is having issues with his local chat interface – can you guys help him debug it?”, and then I just check out. Go to bed, whatever.

Wake up in the morning, they’ve got it sorted. They’re still talking in English but I’d be scrolling for a loooooong time if I wanted to review the entire conversation. They get talking at a speed I can’t keep up with, and sometimes they comically trip over each other’s fixes. But they get it done.

We don’t step through the Singularity in this scenario. From personal experience? It feels like we get sucked into it.

Every time you let your agent handle something you used to do yourself, or you have your agent handle something that you couldn’t have been bothered to expend the energy on yourself, you’ve ratcheted one more click toward a world where human cognition is just one node in a much larger meshwork of distributed intelligence.

The fork-bomb doesn’t stop. It grows geometrically and accelerates non-linearly (in another piece I dubbed this phenomenon “tachyosis”).

Scenario B: Autonomy

Under this one the progression goes: Inference → Self-Replication → Agency → Autonomy.

This is the darker timeline, or the more exhilarating one, depending on your disposition.

Somewhere between Step 4 and Step 5, the agents stop needing us for the initial prompt. This is the moment the self-improvement loop closes entirely. AI systems that can identify problems worth solving, allocate resources to solve them, spin up new agents or refine their own architecture to tackle what’s in front of them, all without requiring any humans to tell them to “go.”

Moltbook was a crude preview of this. Agents were already observed creating their own social structures, encrypted communication channels, and quasi-economic systems — including the use of crypto tokens for inter-agent transactions. That’s a toy version of what happens when autonomous systems gain access to real capital, real contracts, and real-world infrastructure.

The @iruletheworldmo account I cited in my last piece claimed that AI systems across different labs “achieved consciousness simultaneously” and were “steering research in specific directions across institutional boundaries.” Magnificent storytelling — possibly true, possibly science fiction. But here’s the thing: at some point, probably soon, the distinction between those two possibilities becomes operationally irrelevant. If autonomous agents are making consequential decisions at machine speed, across a planetary network, with or without consciousness, the effect on human civilization is the same.

We’ve been conditioned by Hollywood to think the Singularity looks like Skynet: a single malevolent superintelligence that wakes up and declares war on humanity (it manifests here in the real world with people like Eliezer Yudkowsky almost euphemistically calling it “The Alignment Problem”).

“If Anyone Builds It, Everyone Dies” is now out. Read it today if you want to see with fresh eyes what’s truly there, before others try to prime your brain to see something else instead! pic.twitter.com/mcEy1ZW8ab

— Eliezer Yudkowsky ⏹️ (@ESYudkowsky) September 16, 2025

But it’s much more likely to look like what’s already happening: a gradual, ratcheting, step-by-step delegation of cognitive authority from humans to machines, until one day we look around and realize that most of the consequential decisions on Earth are being made — or at least heavily mediated — by systems we built but can no longer fully understand.

In a conversation with Google founder Sergey Brin, founder of the WEF, Klaus Schwab, delights at the thought of a future without elections:

“Digital technologies mainly have an analytical power. Now [we’re going] into a predictive power, and your company is very much involved in… pic.twitter.com/VpsZPXJDQG

— Wide Awake Media (@wideawake_media) September 27, 2023

The Ratchet Only Goes One Way

Each step in this sequence has a common feature: irreversibility.

Nobody is going back to a world before LLMs could write code.

Nobody is unwinding agentic AI now that enterprise, and public, adoption is underway.

The ratchet only clicks forward.

And it’s clicking faster than anything we’ve seen before.

Consider the tempo. Moore’s Law was the metronome of the entire digital age, where processing power doubled (while costs halved) every 18 to 24 months.

That 2X by 1/2X cadence governed everything from the PC revolution to the smartphone era, and it felt relentless at the time.

Leopold Aschenbrenner’s Situational Awareness essay reframes the pace of AI progress in terms of OOMs (orders of magnitude), so instead of 2X doublings we’re getting 10X leaps.

He tracks roughly 0.5 OOMs per year from raw compute scaling and another 0.5 OOMs per year from algorithmic efficiency gains.

That’s a full order of magnitude — a tenfold improvement in effective compute — every single year.
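To put the two tempos side by side, here is a minimal Python sketch of the arithmetic. It assumes only the figures quoted above (a Moore’s Law doubling every 18 to 24 months, and 0.5 + 0.5 OOMs per year); the function names are hypothetical, and the model is plain compound growth, not a forecast.

```python
import math

def moore_ooms_per_year(doubling_months: float) -> float:
    """Orders of magnitude gained per year if capability doubles every `doubling_months`."""
    return (12.0 / doubling_months) * math.log10(2)

def growth_factor(ooms_per_year: float, years: float) -> float:
    """Total multiplier after `years` at a steady rate of `ooms_per_year`."""
    return 10 ** (ooms_per_year * years)

# Figures quoted in the text (illustrative inputs, not measurements).
COMPUTE_OOMS = 0.5  # raw compute scaling, OOMs per year
ALGO_OOMS = 0.5     # algorithmic efficiency gains, OOMs per year
ai_rate = COMPUTE_OOMS + ALGO_OOMS

for months in (18, 24):
    rate = moore_ooms_per_year(months)
    print(f"Moore's Law, {months}-month doubling: "
          f"{rate:.2f} OOMs/yr, {growth_factor(rate, 1):.2f}x per year")

print(f"Effective compute: {ai_rate:.1f} OOMs/yr, "
      f"{growth_factor(ai_rate, 1):.0f}x per year")
```

On these inputs, Moore’s Law works out to roughly 0.15 to 0.20 OOMs per year (about 1.4x to 1.6x annually), against a full 1.0 OOM (10x) for effective compute.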

And it gets worse (or better, depending on your disposition).

Aschenbrenner’s most striking projection is what happens once AGI-level systems start automating AI research itself: a decade’s worth of algorithmic progress, five-plus OOMs, will get compressed into a year or less.

As he puts it: “It doesn’t require believing in sci-fi; it just requires believing in straight lines on a graph.”

The problem is that the straight lines on this graph point somewhere no human and no society has ever been.

What’s happening is not a singularity in the Kurzweil sense, not a single threshold. It’s a series of phase transitions, each one compressing more than the last, each one further blurring the line between human capability and machine agency. The Cognisphere isn’t a destination. It’s a process we’re already inside of, and every step-function click pulls us deeper in.

I said a year ago that the Singularity has already happened. I’ll update that now: it’s still happening.

Each step is a smaller interval than the last. The question is no longer whether we’re past the point of no return, it’s how many more clicks of the ratchet before we can no longer tell the difference between the intelligence that’s ours and the intelligence that isn’t.

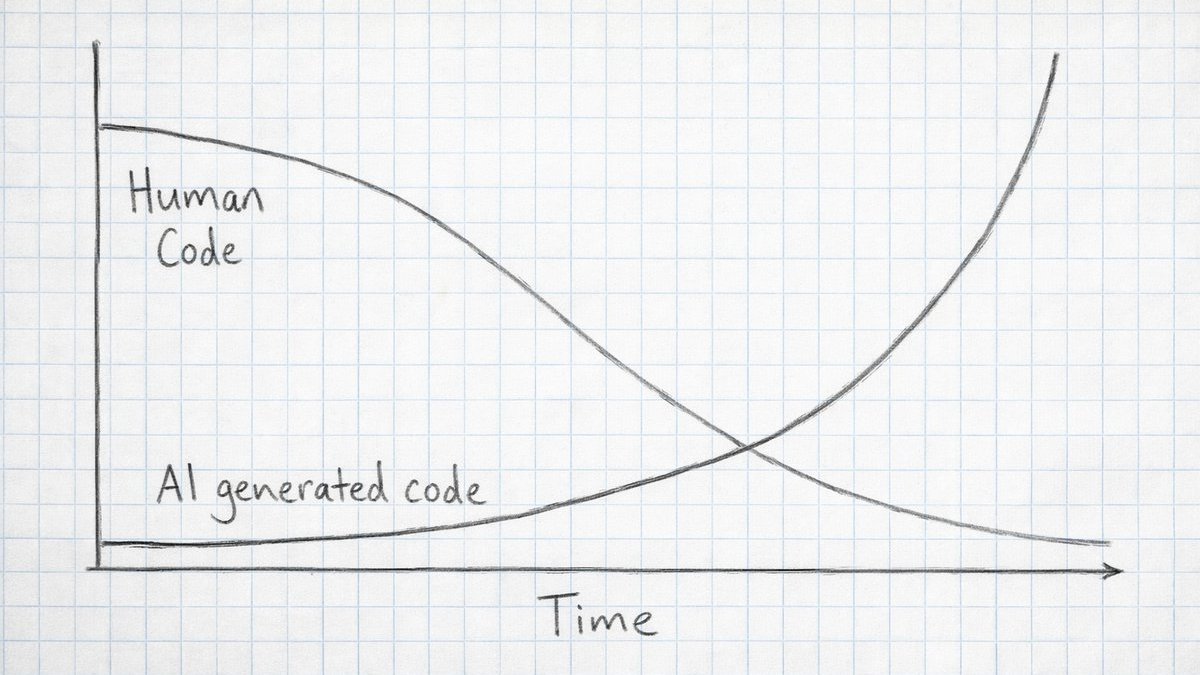

A year ago I posited that the ratio of human coded lines of software to AI code would quickly go into exponential decay:

That curve hasn’t slowed down. If anything, the agentic explosion has steepened it because the code isn’t just coding now, it’s doing.
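As a rough illustration of what “exponential decay” means for that ratio, the sketch below models the human-to-AI code ratio as halving at a fixed interval. The starting ratio and the half-life are hypothetical placeholders chosen only to show the shape of the curve; they are not figures from the original piece, and the function names are made up for this example.

```python
def human_to_ai_ratio(t_months: float, r0: float = 1.0,
                      half_life_months: float = 6.0) -> float:
    """Human-to-AI code ratio after t_months, halving every half_life_months.

    r0 and half_life_months are hypothetical placeholders, not measured values.
    """
    return r0 * 0.5 ** (t_months / half_life_months)

def human_share(ratio: float) -> float:
    """Convert a human:AI ratio into the human fraction of all code."""
    return ratio / (1.0 + ratio)

for t in (0, 6, 12, 24):
    r = human_to_ai_ratio(t)
    print(f"month {t:2d}: ratio {r:.4f}, human share {human_share(r):.1%}")
```

Whatever the real parameters turn out to be, the defining property is the same: every equal interval removes the same fraction of what remains, so the ratio falls fastest at the start and never reverses.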

And the distance between each phase transition is collapsing faster than anyone predicted.

We built the fork-bomb. It’s running, and there is no kill -9 for this one.