Originally published by darkzodchi @zodchiii on X.

View original article

· View original post

· 2026-03-07T12:59:10.000Z

You make roughly 35,000 decisions a day.

What to eat. Whether to reply to that email now or later. Take the highway or side streets. Accept the job offer or negotiate. Hold the stock or sell.

I spent 3 months deciding whether to move to Paris by making pro/con lists in my head at 2am. Never wrote anything down. Just vibes. Moved. Hated it within a week

And for small stuff: what to have for lunch, which Netflix show to watch, vibes are fine. Who cares.

But for the decisions that actually shape your life? Career moves, investments, relationships, health? Vibes are a disaster. And I can prove it with math.

This isn’t a math lecture btw. No calculus. I promise. This is about 6 mental models that changed how I think about literally everything – and once you see them you can’t unsee them.

Fair warning: some of this will make you uncomfortable. You’ll realize you’ve been making bad decisions for years. I know I did.

1. Expected Value — the one formula that runs everything

Ok so this is the big one. If you learn nothing else from this article, learn this.

Any decision you make has possible outcomes. Each outcome has a probability and a payoff. Multiply them together, add them up, and you get the expected value.

EV = Σ (probability × payoff)

That’s it. That’s the formula. I started thinking about this after I endless rekt opportunities in crypto space over last 5 years of my experience.

Your friend offers you a bet. Flip a coin. Heads, he pays you $150. Tails, you pay him $100.

Most people hesitate. “I could lose $100!” And yeah, you could. But run the math:

EV = (50% × $150) + (50% × -$100)

= $75 – $50

= +$25

Every time you take this bet, you make $25 on average. If someone offered you this 100 times you’d be insane to say no. You’d make roughly $2,500.

But here’s the weird thing – in studies, most people reject this bet. Even when the math screams YES. Because humans feel losses about 2x more than equivalent gains. Losing $100 hurts way more than winning $150 feels good. Psychologists call this loss aversion and it screws up almost every decision you make.

Real world example. Your boss offers you two paths:

Option A: Stay in your current role. Guaranteed $120K salary.

Option B: Take a riskier role at a startup (just an example). 60% chance it works and you make $250K in 2 years (equity + salary). 40% chance it fails and you make $70K for the same period.

Most people pick A. Feels safe. Let’s check:

EV of A = $120K (guaranteed)

EV of B = (0.60 × $350K) + (0.40 × $70K)

= $150K + $28K

= $178K

Option B is worth $58K more. But 40% chance of “only” $70K feels terrifying so people don’t take it.

I’m not saying blindly chase every positive EV opportunity. Variance matters. If losing the bet means you can’t pay rent, don’t take it even if EV is positive. But at least KNOW the EV before you decide. Most people don’t even calculate it. They just go with their gut and wonder why they’re stuck.

2. Base Rate Neglect — the reason you’re wrong more than you think

This one is sneaky because it feels so counterintuitive.

Let’s say there’s a disease that affects 1 in 1,000 people. You take a test that’s 99% accurate. It comes back positive.

What’s the probability you actually have the disease?

If you said 99%… you’re wrong. And you’re in good company — most doctors get this wrong too.

Let’s think about it. Out of 1,000 people:

1 actually has the disease. The test catches them (99% accurate). That’s 1 true positive.

999 don’t have it. But the test has a 1% false positive rate. That’s ~10 false positives.

So there are 11 positive results total. Only 1 is real.

P(actually sick | positive test) = 1/11 ≈ 9 percent

Not 99%. Nine percent. The base rate (1 in 1,000) matters enormously, and people ignore it every single time.

How this ruins real decisions:

- “My startup idea is great – look at how well Uber did!” Base rate of startup success: ~6%. Base rate of becoming a unicorn: 0.00006%. I have been trying to do my own startups since 2017. Most of my friends had as well (whose parents were way richer). Thought I was the exception. I wasn’t.

- “This investment returned 40% last year, I should buy more.” Base rate of any fund beating the market 3 years in a row: ~15%. Last year’s performance tells you almost nothing about next year.

- “This guy on Twitter predicted the crash, he must be a genius.” Base rate: if 10,000 people make random predictions, ~100 will nail it perfectly. They are not geniuses. They’re survivors of a large sample.

Whenever someone tells you about a specific success story, ask yourself: what’s the base rate? How often does this type of thing actually work? If the answer is “rarely” — be very skeptical, no matter how convincing the story sounds.

3. Sunk Cost Fallacy — throwing good money after bad (and not just money)

You bought a movie ticket for $15. Thirty minutes in, the movie is terrible.

Do you leave or stay?

Economically, the answer is obvious: leave. The $15 is gone either way- staying doesn’t get it back. The only question is: “Will the next 90 minutes be better spent watching this bad movie or doing literally anything else?”

But almost everyone stays. “I already paid for it.”

That’s the sunk cost fallacy. And it wrecks people’s lives in ways far worse than bad movies.

- Staying in a dead-end job for 3 more years because “I already invested 5 years here.” Those 5 years are gone. The only question is: what’s the best use of the NEXT 3?

- Holding a losing stock or any altcoin / memecoin because “Already down 50%, I can’t sell now.” The stock doesn’t know your entry. The market doesn’t care what you paid. The only question is: if you had cash right now, would you buy this stock at today’s price?

- Staying in a relationship because “we’ve been together for 6 years.” Those 6 years happened. The only question is whether the NEXT 6 will be good.

The fix is aggressively simple: when making any decision, pretend you’re starting from scratch right now. Ignore everything you’ve already spent – time, money, emotion. Only look forward. Would you choose this path today, knowing what you know?

If the answer is no, get out. The past is not coming back regardless.

4. Bayesian Thinking — how to update your beliefs like a scientist (not a politician)

Most people form an opinion and then defend it forever. New evidence comes in? Doesn’t matter – they already decided.

This is the opposite of how you should think.

Bayes’ theorem gives you the mathematically correct way to change your mind:

P(belief | new evidence) = P(evidence | belief) × P(belief) / P(evidence)

Looks scary. It’s not. Let me translate it to English:

Your updated belief = how well the evidence fits your theory × how likely your theory was before ÷ how common the evidence is in general.

Example. You think your coworker is going to quit. Before any evidence, you’d say there’s a 10% chance (most people don’t quit in any given month). That’s your prior.

Then you notice she updated her LinkedIn profile. Hm.

- If she IS about to quit, what’s the probability she’d update LinkedIn? Pretty high — say 70%.

- If she’s NOT about to quit, what’s the probability she’d update LinkedIn? People do it randomly all the time — say 15%.

P(quitting | LinkedIn update) = (0.70 × 0.10) / ((0.70 × 0.10) + (0.15 × 0.90))

= 0.07 / (0.07 + 0.135)

= 0.07 / 0.205

≈ 34%

One piece of evidence moved you from 10% to 34%. Not to 90% -that would be overreacting. Not staying at 10% – that would be ignoring evidence. 34% is the mathematically rational update.

Now she also starts taking calls outside the office. Another update. Maybe goes to 55%. She’s suddenly vague about long-term projects. 70%.

Each piece of evidence nudges the probability. Gradually. Proportionally. No drama. No sudden flipping between “definitely yes” and “definitely no.”

This is how prediction markets work btw. The price of a contract IS the Bayesian posterior- constantly updating as new information flows in. That’s why Polymarket predicted the Iran strikes before CNN. Thousands of people feeding tiny pieces of evidence into a price. Each one nudging it 0.5% this way or 2% that way. The aggregate is spookily accurate.

The takeaway: hold opinions loosely. Update them constantly. The strength of your update should be proportional to the strength of the evidence. Most people either ignore evidence entirely or overcorrect wildly. Both are wrong.

5. Survivorship Bias — you’re only seeing the winners

You read about a college dropout who built a billion-dollar company. Inspiring, right? Maybe college is a waste of time?

Except you never hear about the 10,000 other dropouts who are broke. They don’t get magazine covers. They don’t get podcast interviews. They just quietly struggle while one survivor gets all the attention.

This is survivorship bias. You only see the winners, so you massively overestimate the probability of winning.

It’s everywhere:

- Crypto Twitter. Everyone posting gains. Nobody posting losses. The guy who turned $500 into $50K gets 200K likes. The 500 guys who turned $500 into $0 deleted their accounts. Your feed makes it look like everyone’s winning. They’re not. 87% of Polymarket wallets lose money. You just never see them post about it.

- Restaurant advice. “Just follow your passion and open a restaurant!” 60% of restaurants close within the first year. 80% close within five. The ones that survive become your favorite spots. The dead ones are invisible. Following your passion is great advice if you ignore the graveyard.

- Music industry. “Just upload to Spotify and go viral!” 90,000 tracks are uploaded to Spotify every single day. The median track gets 30 plays total. You hear about the ones that go viral because… they went viral. The other 89,990 are silence.

The fix: whenever someone shows you a success story as proof that something works, look for the denominator. How many people tried this? What percentage succeeded? If you can’t find the denominator, assume the success rate is very low. Because the world only shows you numerators.

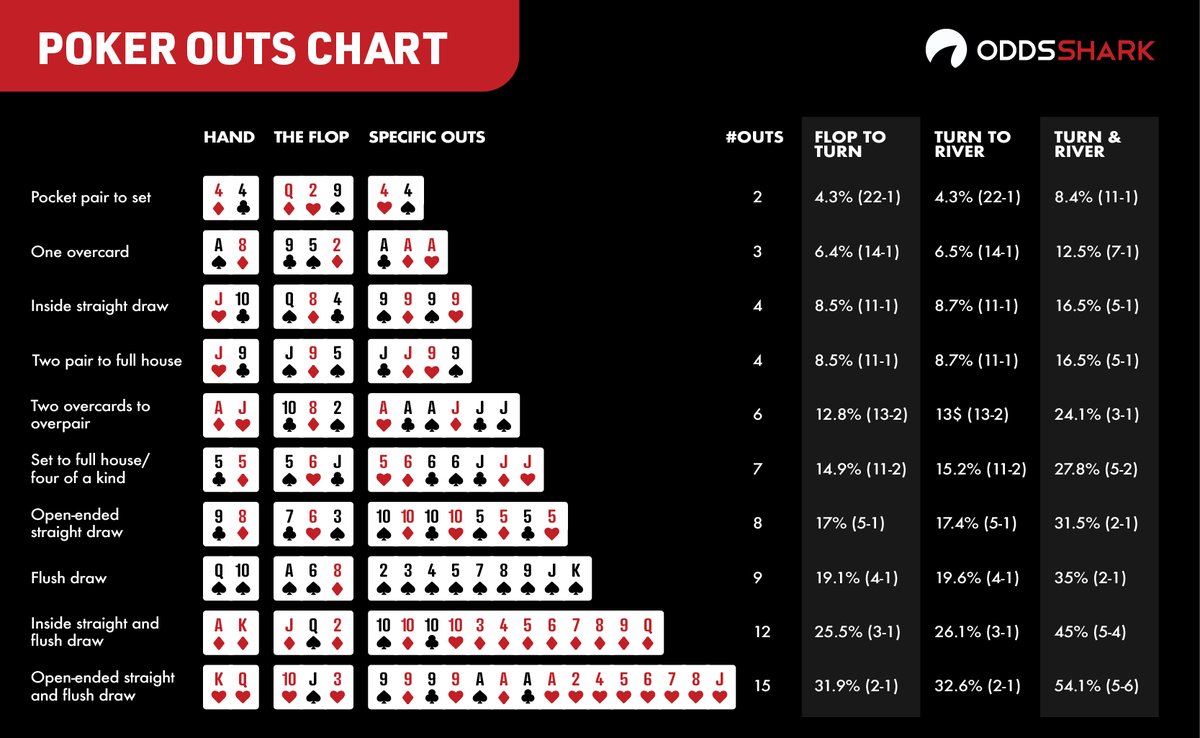

6. The Kelly Criterion — how much to bet when you DO have an edge

Ok so let’s say you’ve done everything above. You calculated the EV. Checked the base rate. Updated your beliefs with Bayes. Accounted for survivorship bias. And you’ve found a genuinely good opportunity.

How much should you bet?

Most people have two modes: too much or too little. Either they yolo everything because they’re “confident” or they put in a tiny amount because they’re scared. Both are wrong and there’s a formula for exactly the right amount.

f* = (p × b – q) / b

p = probability of winning (your honest estimate)

q = probability of losing (= 1 – p)

b = how much you win per dollar risked

Example. You find a bet where you think you have a 60% chance of winning. If you win, you double your money (b = 1).

f* = (0.60 × 1 – 0.40) / 1 = 0.20

Kelly says: bet 20% of your bankroll.

But here’s what 50 years of real-world application has taught us: full Kelly is way too aggressive for humans. The variance will destroy you emotionally long before the math pays off. Every professional gambler and trader I’ve studied uses quarter-Kelly to half-Kelly.

So instead of 20%, bet 5-10%. It’s not as exciting. You won’t get rich tomorrow. But you also won’t blow up next week.

This applies to everything btw. Not just money:

- Career: Don’t quit your job to go all-in on a side project. Reduce to 4 days/week and spend 1 day on the project. That’s ~quarter-Kelly.

- Learning: Don’t try to learn 5 new skills at once. Pick the one with the highest EV and go deep.

- Relationships: Don’t spread yourself thin across 20 shallow friendships. Invest deeply in 4-5 people who actually matter.

The principle: when you have edge, concentrate. But not too much. Leave room to be wrong.

How all 6 connect (and why prediction markets are the ultimate training ground)

These aren’t random math tricks. They’re a complete system for thinking clearly:

- EV tells you whether to act

- Base rates ground your estimates in reality

- Sunk costs tell you what to ignore

- Bayes tells you how to update

- Survivorship bias tells you what’s missing from the picture

- Kelly tells you how much to commit

Prediction markets like Polymarket are weirdly the best training ground for all 6. Every contract forces you to assign a probability. The market price shows you the crowd’s probability. If you disagree — and you have a reason to — you trade. Your P&L tells you whether your thinking was right.

It’s like a gym for your decision-making. Except instead of reps, you’re placing bets. And instead of soreness, you get a wallet balance that doesn’t lie.

The top 1.2% of Polymarket traders I analyzed? They all think this way. Not because they read the same books — because the market beat it into them. Make a bad probability estimate, lose money, learn. Repeat 500 times. That’s the curriculum.

The uncomfortable part

Here’s what bugged me the most when I first learned this stuff.

I realized I have been making the WRONG decision on basically everything important in my life. Stayed in a job way too long (sunk cost). Didn’t negotiate my salary because “they might say no” (loss aversion + wrong EV calculation). Followed crypto influencers because they had one big win (survivorship bias). Changed my mind too fast on some things, too slow on others (bad Bayesian updating). Spread myself across too many projects (anti-Kelly).

And the worst part? I thought I was being rational the whole time. I had “reasons” for every decision. They just happened to be the wrong reasons that my brain invented to justify what my gut already decided.

That’s the trap. You don’t feel irrational when you’re being irrational. You feel certain. The certainty IS the bug.

The 6 models above don’t make you perfect. Nothing does. But they give you a structured way to check your own thinking. Like a pilot going through a pre-flight checklist — not because they don’t know how to fly, but because even experts miss things when they rely purely on instinct.

Start using them. Start with one. Next time you face a real decision — career, money, relationship, whatever — pause for 5 minutes and run through the EV calculation. Just that one thing.

You’ll be shocked how different the answer is from what your gut tells you.

Reading list (if you want to go deeper)

In order of how much they changed my thinking:

- Thinking, Fast and Slow – Daniel Kahneman. The Bible. Covers almost everything in this article and 200 more biases you didn’t know you had.

- Superforecasting – Philip Tetlock. How the world’s best predictors think. Directly applicable to prediction markets.

- The Signal and the Noise –Nate Silver. How to find real patterns and ignore fake ones.

- Fooled by Randomness -Nassim Taleb. Why most “skill” is luck in disguise.

- Fortune’s Formula – William Poundstone. The wild history of Kelly criterion. Reads like a thriller.

- Annie Duke – Thinking in Bets. Poker player turned decision consultant. Very practical.

One more thing.

The fact that you read to the end of an article about expected value and Bayesian updating puts you in a tiny minority. Most people scroll past anything with a formula. You didn’t.

That is your edge. Not information – everyone has access to the same books and formulas. The edge is actually caring enough to learn this stuff and apply it.

Now go make a better decision than you would have made an hour ago.

Thanks for reading!

Hi, there should be 250k instead of 350k in calculation

Real world example. Your boss offers you two paths:

Option A: Stay in your current role. Guaranteed $120K salary.

Option B: Take a riskier role at a startup (just an example). 60% chance it works and you make $250K in 2 years (equity + salary). 40% chance it fails and you make $70K for the same period.

Most people pick A. Feels safe. Let’s check:

EV of A = $120K (guaranteed)

EV of B = (0.60 × $350K) + (0.40 × $70K)

= $150K + $28K

= $178K