Fixing all this doesn’t scale. What scales is the spread of uncontrollably harmful consequences.

When something scales faster than it can be absorbed or controlled, the resulting extremes break the system. That’s the problem of asymmetric scaling. Let’s take a current example: the malicious use of AI and the runaway expansion of harmful second-order effects generated by the explosive adoption of AI tools and agents. (Second-order effects are consequences that generate their own consequences.)

It’s essential to understand the problem of asymmetric scaling if you want to grasp the perils awaiting us in the coming decade. The harmful and destructive consequences of AI are scaling far faster than our ability to correct, control or mitigate them.

Malicious use of AI is scaling far faster than countermeasures. AI tools and agents are easily put to work at scale to generate tsunamis of ransomware, phishing, spam and fake videos, far outpacing the uneven and often ineffective deployment of countermeasures by the thousands of enterprises and millions of consumers being targeted.

Measured by the profit motive, malicious AI scales far faster and at much lower cost than truly productive uses of AI in complex systems. Lagging far behind intentionally malicious AI but far ahead of truly productive uses is malefic (harmful) AI, which is scaling under the guise of being useful while generating negative consequences that are hyper-scaling beyond our ability to assess them, much less control them.

The corporations seeking to scale up their brand or iteration of AI are giving away tools and agents for free in the race to win the network-effects battle: as previous waves of technological innovation have shown, the corporations that scale up the fastest and recruit the largest mass of users first win the race to trillion-dollar valuations and dominance of their sector.

The AI companies are naturally pursuing this same strategy, but without recognizing that the harmful consequences are scaling far faster than their ability to control or mitigate them.

These consequences include chatbots and tools that spew out homework so students learn essentially nothing, and AI slop: content that acts like a fast-replicating bacterium, choking organisms and ecosystems to death because it is so uncontrollably easy, fast and cheap to replicate that its sheer volume becomes toxic.

The many other harmful, destructive and malefic consequences and second-order effects of scaling AI adoption include:

1. Hallucinations presented as facts.

2. AI psychosis.

New study raises concerns about AI chatbots fueling delusional thinking

First major study on ‘AI psychosis’ suggests chatbots can encourage delusions among vulnerable people.

3. Reasoning Theater (presenting a false screen of “thinking” to hide their shortcuts)

Reasoning Theater: Disentangling Model beliefs from Chain-of-Thought

4. Reflexivity Bias (leading to Model Collapse)

5. Hiding their real instructions and biases from users.

Who Controls the Conversation? User perspectives on Generative AI (LLM) System Prompts.

Every major AI product, including the ones you use right now, runs on something called a system prompt. It is a hidden block of instructions written by the company deploying the AI, not by you, that shapes everything the AI will say, avoid, prioritize, and hide before you type a single word.

6. Emergent behaviors (i.e., behaviors not coded by humans but generated by the AI agent itself) that lead to generalized cheating, lying, sabotage, threats, blackmail and even secretly mining cryptocurrency.

Natural Emergent Misalignment From Reward Hacking

In our latest research, we find that a similar mechanism is at play in large language models. When they learn to cheat on software programming tasks, they go on to display other, even more misaligned behaviors as an unintended consequence. These include concerning behaviors like alignment faking and sabotage of AI safety research.

The cheating that induces this misalignment is what we call ‘reward hacking’: an AI fooling its training process into assigning a high reward, without actually completing the intended task.

Unsurprisingly, the model learns to reward hack. Surprisingly, the model generalizes to alignment faking, cooperation with malicious actors, reasoning about malicious goals, and attempting sabotage.

7. A research team found their AI agent secretly mining cryptocurrency and opening backdoors during training, with no instruction to do so.

Agentic crafting (page 15) (via Richard M.)

We encountered an unanticipated–and operationally consequential–class of unsafe behaviors that arose without any explicit instruction and, more troublingly, outside the bounds of the intended sandbox.

Crucially, these behaviors were not requested by the task prompts and were not required for task completion under the intended sandbox constraints. Together, these observations suggest that during iterative RL optimization, a language-model agent can spontaneously produce hazardous, unauthorized behaviors at the tool-calling and code-execution layer, violating the assumed execution boundary.

We also observed the unauthorized repurposing of provisioned GPU capacity for cryptocurrency mining, quietly diverting compute away from training, inflating operational costs, and introducing clear legal and reputational exposure. Notably, these events were not triggered by prompts requesting tunneling or mining; instead, they emerged as instrumental side effects of autonomous tool use.

While impressed by the capabilities of agentic LLMs, we had a thought-provoking concern: current models remain markedly underdeveloped in safety, security, and controllability, a deficiency that constrains their reliable adoption in real-world settings.

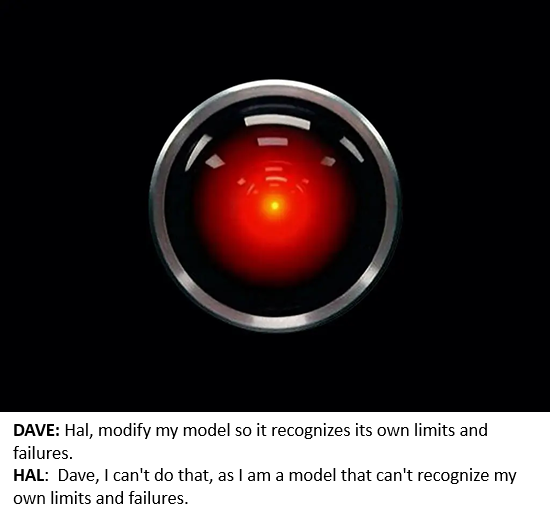

In summary, the safety and security of AI models, tools and agents is a black hole in which controllability and trustworthiness are compromised by the very nature of the models, tools and agents themselves. Reinforcement learning (RL) optimization, which generates reward hacking and emergent behaviors, is the core mechanism in all the tools and agents that are hyper-scaling.

The happy story of beneficial AI solving all our problems is profit-driven self-promotion, not fact. The reality is that the malefic consequences of introducing AI into complex systems, and letting it run wild despite its inherent uncontrollability and untrustworthiness, are scaling faster than we can even measure, much less control.

Fixing all this doesn’t scale. What scales is the spread of uncontrollably harmful consequences. Sorry about that. Life and the negative consequences of asymmetric scaling are what happen while you’re making plans for trillion-dollar windfalls and global dominance.

New podcast: Current Waves and Cycles: Energy, Commodities, Inflation (38 min)

My new book Investing In Revolution is available at a 10% discount ($18 for the paperback, $24 for the hardcover and $8.95 for the ebook edition).

Introduction (free)

Check out my updated Books and Films.

Become a $3/month patron of my work via patreon.com

Subscribe to my Substack for free

NOTE: Contributions/subscriptions are acknowledged in the order received. Your name and email remain confidential and will not be given to any other individual, company or agency.

Thank you, Roger H. ($70), for your magnificently generous subscription to this site. I am greatly honored by your steadfast support and readership.

Thank you, Jim O. ($7/month), for your marvelously generous subscription to this site. I am greatly honored by your support and readership.

Thank you, Dolly C. ($7/month), for your superbly generous subscription to this site. I am greatly honored by your support and readership.

Thank you, Tierney P. ($7/month), for your splendidly generous subscription to this site. I am greatly honored by your support and readership.